Available for hire

Jeffrey Lowe

Full-Stack & Python Developer

I designed, shipped, and maintain a production Django web app end to end, solo, plus a companion Python bot service and a React Native mobile app, both in active development. Self-taught and looking for my first developer role.

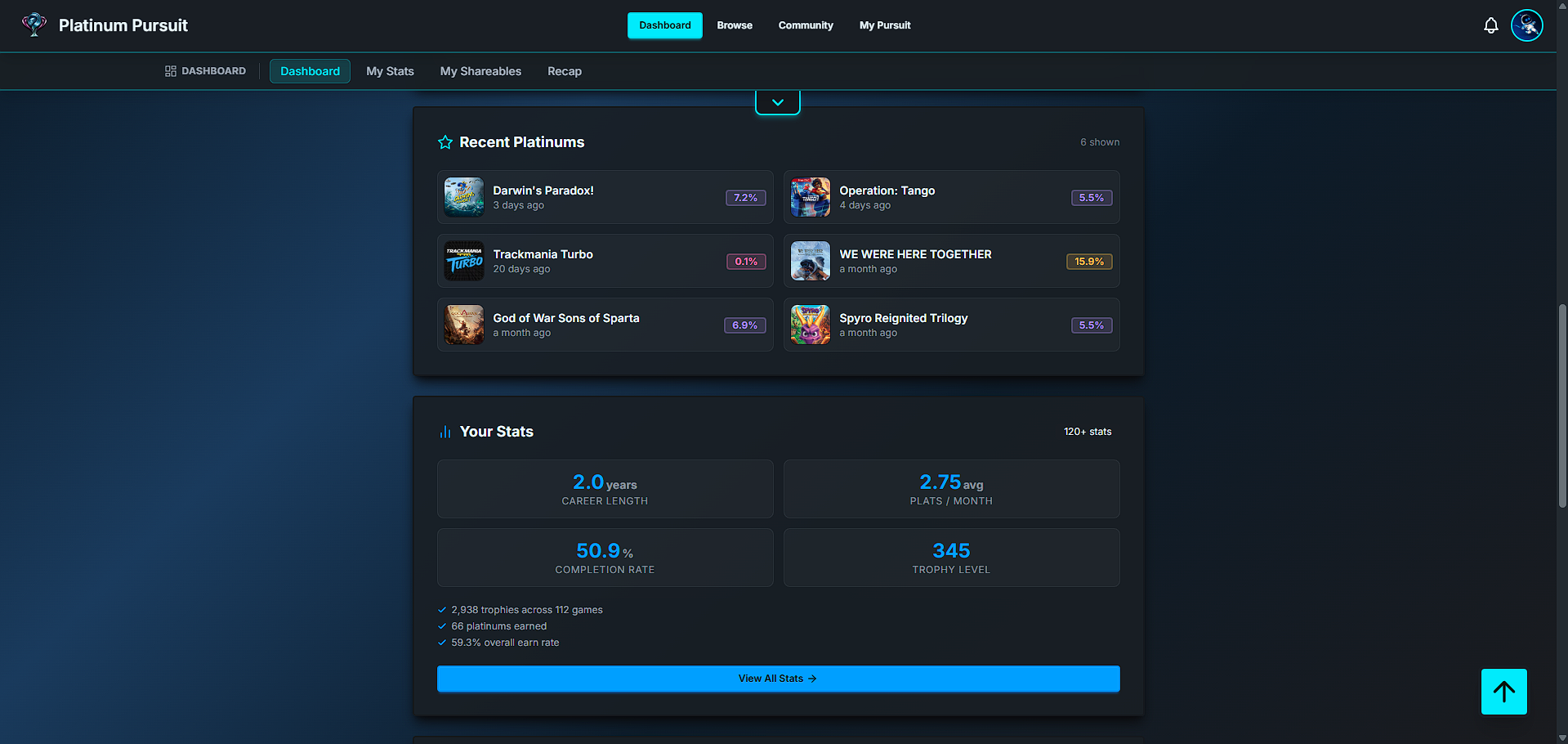

PlatPursuit

PlayStation trophy tracking, end to end. Production Django web app with active users, payment flows, and ongoing maintenance.

What I built

- Schema and ORM

30+ Django model schema covering games, trophies, badges, dashboards, and payments. Includes a Concept.absorb() migration method that safely merges 15+ related models during sync reassignments, preserving relational integrity when PSN reassigns concepts upstream.

- Third-party API integration

Six-strategy IGDB matching pipeline with retry-on-401, no-match persistence, and platform-overlap enforced as a hard requirement. Handles cover art, metadata, and Tier-2 stats end to end across thousands of games.

- Frontend and responsive design

Customizable 41-module dashboard with a documented three-layout system (375 / 768 / 1024 px). Mobile-first, dark-theme-aware. The design system is the canonical reference for every page in the app.

- Database performance

Diagnosed and fixed a production OOM caused by select_related eagerly fetching a ~30 KB JSON column across every cover-art render. Repair was targeted .defer() chains paired with select_related, with explicit .only() opt-in only where the JSON was actually needed. Also ships Subquery/OuterRef workarounds for Django's .update() SET-clause limits.

- Process maturity

Every system has a doc with a mandatory Gotchas and Pitfalls section. When behavior changes, the relevant doc updates in the same branch. Three-phase quality workflow: plan with a reuse check, build with inline audits, polish before merge.
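The retry-on-401 step mentioned for the IGDB pipeline can be sketched in plain Python. This is a minimal illustration under stated assumptions, not PlatPursuit's actual code: `Response`, `call_with_reauth`, and the `fetch` / `refresh_token` callables are hypothetical names, and retrying exactly once after a refresh is an assumed policy.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class Response:
    """Hypothetical stand-in for an HTTP response."""
    status: int
    body: dict


def call_with_reauth(
    fetch: Callable[[str], Response],
    refresh_token: Callable[[], str],
    token: str,
    max_reauth: int = 1,
) -> tuple[Response, str]:
    """Call fetch(token); on a 401, mint a fresh token and try again.

    Returns the final response together with the token that produced it,
    so the caller can keep using the refreshed token afterwards.
    """
    response = fetch(token)
    for _ in range(max_reauth):
        if response.status != 401:
            break
        token = refresh_token()  # assumed to return a new bearer token
        response = fetch(token)
    return response, token
```

Passing the fetch and refresh steps in as callables keeps the retry policy separate from the HTTP client, so the policy can be exercised with fakes.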

The signature story

Designing TokenKeeper: a custom worker pool for Sony's PSN API

Sony doesn't expose a public OAuth flow for third parties. PlatPursuit authenticates with PSN using NPSSO cookies, which mint short-lived access tokens whose state needs to flow cleanly through every checkpoint of a sync pipeline: queue dispatcher, per-token worker, rate limiter, response parser, DB writer. Celery is a poor fit for that: every task hand-off crosses a broker and a serializer (JSON by default since Celery 4.0, pickle if enabled), which forces lossy conversion and implicit assumptions at each step. TokenKeeper keeps that state in-process and JSON-shaped, so each piece of the pipeline reads exactly what the previous one wrote, with no decoding ambiguity.

So I built it: one long-lived Python process, in-memory token pool, no broker.

TokenKeeper runs three worker threads per token group, pulling jobs from a five-tier Redis priority queue (orchestrator, high, medium, low, bulk). The bulk tier exists specifically so whale accounts with 5,000+ games can't starve normal users. Each token is wrapped in a TokenInstance dataclass tracking busy state (atomic Redis-based locking), expiry, an LRU cache of user lookups, and outbound IP for proxy routing. A health loop runs every 60 seconds: it refreshes any token within 5 minutes of expiring, publishes stats over Redis pub/sub for the dashboard, and runs a circuit breaker that trips on five PSN 5xx errors in a 60-second window. When PSN goes down, sync timestamps get backdated and writes pause cleanly until it recovers.
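The circuit breaker described above (five failures inside a 60-second window) can be sketched as a sliding-window counter. This is a minimal sketch, not TokenKeeper's implementation: the `CircuitBreaker` class, its method names, and the injectable `clock` are assumptions, and the breaker here closes again on its own once old failures age out of the window.

```python
from __future__ import annotations

import time
from collections import deque
from typing import Callable


class CircuitBreaker:
    """Opens when `threshold` failures land within `window` seconds."""

    def __init__(
        self,
        threshold: int = 5,
        window: float = 60.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.threshold = threshold
        self.window = window
        self._clock = clock
        self._failures: deque[float] = deque()  # timestamps, oldest first

    def _prune(self, now: float) -> None:
        # Drop failures that have aged out of the sliding window.
        while self._failures and now - self._failures[0] >= self.window:
            self._failures.popleft()

    def record_failure(self) -> None:
        """Call once per upstream 5xx response."""
        now = self._clock()
        self._failures.append(now)
        self._prune(now)

    @property
    def is_open(self) -> bool:
        """True while enough recent failures remain inside the window."""
        self._prune(self._clock())
        return len(self._failures) >= self.threshold
```

A worker would check `is_open` before issuing a request and pause while it reads true; injecting the clock makes the window testable without real sleeps.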

The hardest bug in this system wasn't conceptual; it was psnawp's default rate limiter, which uses a SQLite-backed bucket and produced "database is locked" errors under concurrent load. The fix was a small custom PSN client subclass that swaps in an in-memory bucket. A PSNManager facade hides all of this from the rest of the app: views, cron handlers, and API endpoints call assign_job() and never see the worker. One Python process owns the full PSN surface: auth, rate limiting, retries, priority queueing, and observability. No broker, no result backend, no separate worker pool to scale. The takeaway: when the obvious choice imposes a heavier operational burden than the workload justifies, building the right thing custom is often cheaper to operate and simpler to reason about. Especially when stateful auth sits at the core of the workload.
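To illustrate why an in-memory bucket sidesteps the locking problem, here is a minimal thread-safe token bucket. It is a generic sketch, not the psnawp subclass itself: `InMemoryBucket`, `try_acquire`, and the capacity and refill parameters are hypothetical, and how such a bucket gets wired into the PSN client is left out.

```python
import threading
import time
from typing import Callable


class InMemoryBucket:
    """Thread-safe token bucket held in process memory (no database file)."""

    def __init__(
        self,
        capacity: int,
        refill_per_second: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self._clock = clock
        self._tokens = float(capacity)
        self._updated = clock()
        self._lock = threading.Lock()

    def try_acquire(self) -> bool:
        """Take one token if available; returns False instead of blocking."""
        with self._lock:
            now = self._clock()
            elapsed = now - self._updated
            # Refill in proportion to elapsed time, capped at capacity.
            self._tokens = min(
                float(self.capacity),
                self._tokens + elapsed * self.refill_per_second,
            )
            self._updated = now
            if self._tokens >= 1.0:
                self._tokens -= 1.0
                return True
            return False
```

Because all state sits behind one `threading.Lock`, concurrent worker threads contend on a mutex rather than on a file lock.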

Stack

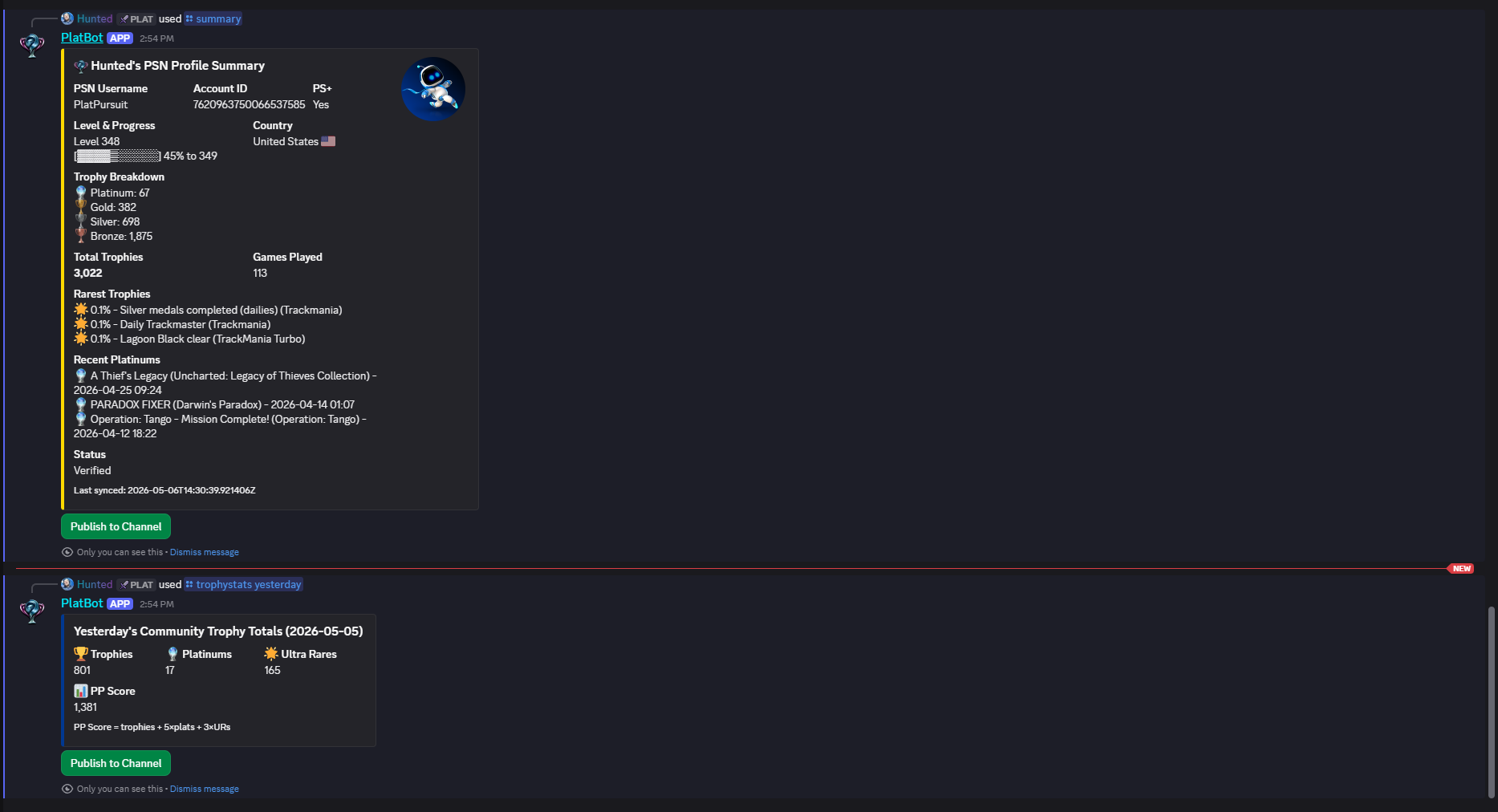

PlatBot

Companion Discord bot for the PlatPursuit ecosystem. discord.py and FastAPI under one asyncio event loop, with async workers for rate-limit-aware role syncing.

What I built

- Multi-framework architecture

discord.py and FastAPI run in the same asyncio event loop, coordinated via asyncio.gather. FastAPI is embedded as an inbound webhook receiver, not a separate microservice; PlatPursuit pushes role updates over HTTP, the bot reflects them to Discord. One process, dual runtime, no broker, no IPC.

- API contract design

Pydantic schemas at every boundary. The RoleRequest model validates inbound webhooks; outbound calls to PlatPursuit's REST surface go through a persistent aiohttp.ClientSession with token auth headers attached, reused across all 14 commands and the worker pool. Twelve PlatPursuit endpoints consumed.

- Async patterns

asyncio.Queue with background workers for rate-limit-aware role operations. Deferred slash commands (interaction.response.defer) to respect Discord's 3-second interaction timeout. Async View button callbacks for multi-step flows. Signal handlers (SIGTERM, SIGINT) cancel tasks in order and drain queues before close, so in-flight operations don't drop on deploy.

- Discord rate-limit handling

Discord's role API caps at a small burst before returning 429. Workers detect the 429, read the Retry-After header from the response (not a guess), sleep the exact interval requested, and retry up to five times before giving up. The webhook never blocks on this; it returns 202 Accepted and moves on. Pragmatic resilience, not a sophisticated library.

- Feature breadth

14 slash commands covering account linking, trophy summaries, paginated trophy cases, role syncing, and community-wide stats with timezone-aware aggregation. Member event handlers for join welcomes and auto-unlinking on leave. Mod tooling for forced refreshes and badge audits. Each command in its own discord.py Cog file.
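The Retry-After handling described in the rate-limit bullet can be sketched as a small async retry loop. This is an illustration under assumptions rather than PlatBot's code: `call_with_retry_after`, the `(status, headers)` reply shape, and the one-second fallback when the header is missing are all hypothetical.

```python
import asyncio
from typing import Awaitable, Callable, Mapping, Tuple

# Hypothetical reply shape: (HTTP status code, response headers).
Reply = Tuple[int, Mapping[str, str]]


async def call_with_retry_after(
    send: Callable[[], Awaitable[Reply]],
    max_retries: int = 5,
    sleep: Callable[[float], Awaitable[None]] = asyncio.sleep,
) -> bool:
    """Return True once `send` succeeds, False after exhausting retries."""
    for attempt in range(max_retries + 1):  # first try plus max_retries
        status, headers = await send()
        if status != 429:
            return 200 <= status < 300
        if attempt == max_retries:
            break
        # Sleep for the interval the server asked for (assumed fallback: 1s).
        delay = float(headers.get("Retry-After", "1"))
        await sleep(delay)
    return False
```

Injecting `sleep` keeps the loop testable without waiting in real time; in production the default `asyncio.sleep` yields to the event loop so other workers keep running.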

The signature story

Embedding FastAPI inside discord.py's event loop

PlatPursuit needs to push role changes to Discord: badge unlocks, milestone achievements, premium tier upgrades. Discord's role API is aggressively rate-limited (small burst, then 429), so a synchronous webhook would either time out or fail under load. The cleanest version of this system is one HTTP boundary: PlatPursuit calls PlatBot, PlatBot reflects the change to Discord. The catch is that the bot is already speaking to Discord over a long-lived gateway connection, and that connection lives on an asyncio event loop. Adding a second process would duplicate the auth surface and force two deployments. Adding a thread for HTTP would fight the loop.

The fix is one event loop. FastAPI runs as an asyncio.create_task alongside bot.start(TOKEN), both gathered via asyncio.gather. There's no separate microservice, no broker, no IPC: PlatPursuit POSTs to /assign-role, FastAPI validates the payload against a Pydantic schema, drops the work onto an asyncio.Queue, and returns 202 Accepted immediately. Two background workers (assign + remove) drain the queues continuously. When Discord returns a 429, the worker reads the Retry-After header (not a guess), sleeps the requested interval, and retries up to five times before giving up. Outbound calls back to PlatPursuit's API reuse a persistent aiohttp.ClientSession with token auth attached, so every cog and worker shares a connection pool.
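The shape of that arrangement, two long-lived services gathered on one loop with a queue between the webhook and the worker, can be sketched using only the standard library. Everything here is a stand-in: `handle_webhook`, `role_worker`, `run_single_loop`, and the `user_id` / `role_id` payload keys are hypothetical, and `bot_coro` and `http_coro` stand in for the bot's start coroutine and the HTTP server's serve coroutine.

```python
import asyncio
from typing import Awaitable, Callable


async def handle_webhook(queue: asyncio.Queue, payload: dict) -> int:
    """Validate minimally, enqueue, and answer without waiting on Discord."""
    if "user_id" not in payload or "role_id" not in payload:
        return 422  # reject malformed payloads at the boundary
    queue.put_nowait(payload)
    return 202  # accepted: the worker applies the change later


async def role_worker(
    queue: asyncio.Queue,
    apply_role: Callable[[dict], Awaitable[None]],
) -> None:
    """Drain the queue forever, one job at a time."""
    while True:
        job = await queue.get()
        try:
            await apply_role(job)
        finally:
            queue.task_done()


async def run_single_loop(
    bot_coro: Awaitable[None],
    http_coro: Awaitable[None],
    queue: asyncio.Queue,
    apply_role: Callable[[dict], Awaitable[None]],
) -> None:
    """Run both services and the worker on the same event loop."""
    worker = asyncio.create_task(role_worker(queue, apply_role))
    try:
        await asyncio.gather(bot_coro, http_coro)
    finally:
        worker.cancel()
        await asyncio.gather(worker, return_exceptions=True)
```

The webhook handler only touches the queue, which is why it can return 202 immediately regardless of how slowly the worker is progressing.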

Single process, dual runtime. The webhook endpoint never blocks on Discord; the bot never blocks on HTTP; neither blocks on PlatPursuit's REST surface. Signal handlers (SIGTERM, SIGINT) cancel tasks in order and drain the queues before close, so role operations in flight don't get dropped on deploy. The takeaway: when two frameworks are both async and share a runtime model, embedding one inside the other is often cheaper than separating them. The contract is a Pydantic schema and a queue, not a service mesh.
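A drain-then-cancel shutdown along those lines might look like the sketch below. The function names are hypothetical, and the ordering shown (wait for every queue to empty, then cancel the now-idle workers) is an assumption about what "drain before close" means here. `loop.add_signal_handler` is available on Unix event loops only.

```python
import asyncio
import signal
from typing import Iterable


async def drain_and_stop(
    queues: Iterable[asyncio.Queue],
    workers: Iterable[asyncio.Task],
) -> None:
    """Wait for queued jobs to finish, then cancel the idle workers."""
    workers = list(workers)
    for queue in queues:
        await queue.join()  # returns once every job has called task_done()
    for worker in workers:
        worker.cancel()
    # Collect the cancellations so no task is left pending at loop close.
    await asyncio.gather(*workers, return_exceptions=True)


def install_signal_handlers(
    loop: asyncio.AbstractEventLoop,
    stop_event: asyncio.Event,
) -> None:
    """Set stop_event on SIGTERM or SIGINT (Unix event loops only)."""
    for sig in (signal.SIGTERM, signal.SIGINT):
        loop.add_signal_handler(sig, stop_event.set)
```

The main coroutine would await `stop_event.wait()` and then call `drain_and_stop`, so a deploy signal lets in-flight role operations finish before the process exits.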

Stack

How I work

Five things that show up in everything I ship.

Plan → Build → Polish

Every feature goes through three phases. Planning surfaces existing code that already solves the problem (the rule of three: no abstraction until three concrete uses exist). Build runs background audits on each completed chunk to catch quality drift while context is fresh. Polish reviews every changed file against the quality bar before merge. Each phase catches different categories of issue at the cheapest possible time.

Docs ship in the same branch as the code

PlatPursuit has 49 system docs, each with a mandatory Gotchas and Pitfalls section. When a system's behavior changes, its documentation updates in the same branch. Stale docs are worse than no docs. The discipline pays off three ways: future-me onboards faster, present-me explains decisions clearly, and the writing surfaces design problems before they ship.

AI tools are part of my workflow

Claude Code accelerates the build phase. Architecture, system design, and every decision about what to ship and what to defer are mine. The three-phase workflow above exists in part because the parts AI is good at sit between two phases that have to stay human: planning before, audit after. I review every line that ships, and I don't merge code I can't defend in an interview.

Search before you build

Before finalizing any plan, I search the codebase for utilities, helpers, and patterns that already cover the proposed work. If existing code covers the need, I reuse it. If it almost covers it, I extend it. New abstractions only get created when nothing suitable exists. Three similar lines is fine; speculative generalization for a future requirement that may never come is technical debt with extra steps.

Build what's needed, not what might be

Scope follows the task, not what the task might become. A bug fix doesn't need surrounding cleanups; a one-shot operation doesn't need a helper; new abstractions wait until three concrete uses exist. Unused code gets deleted, not deprecated or wrapped behind a flag. Validation lives at system boundaries (user input, external APIs); internal code is trusted to do what it says.

Let's talk

I'm actively looking for full-stack, backend, or Python developer roles. Email is the fastest way to reach me, and the resumes below are tailored to each track.